Queues (FIFO): The Ultimate Buffer Data Structure for Conquering Hardware Chaos

Introduction: The Intelligent Waiting Room of Embedded Systems

Hello engineers and fellow developers at www.123microcontroller.com! Today, the dark-eyed engineer returns to dissect another fundamental Data Structure that operates as the ultimate “Waiting Room” for commanding information flow within embedded systems: The mighty Queue.

In the unforgiving realm of hardware architecture, data rarely arrives on schedule. Telemetry flooding in from asynchronous sensors, incoming byte streams from a UART serial port, or concurrent tasks colliding within an RTOS—if your firmware fails to process them at identical speeds, raw data simply obliterates off the silicon. The Queue structure was specifically architected to conquer this exact crisis. Today, we delve deeply into how high-level structural algorithms define the Queue (FIFO) and why it acts as a critical lifeline for hardware synchronization!

The Philosophy and Execution of Queues

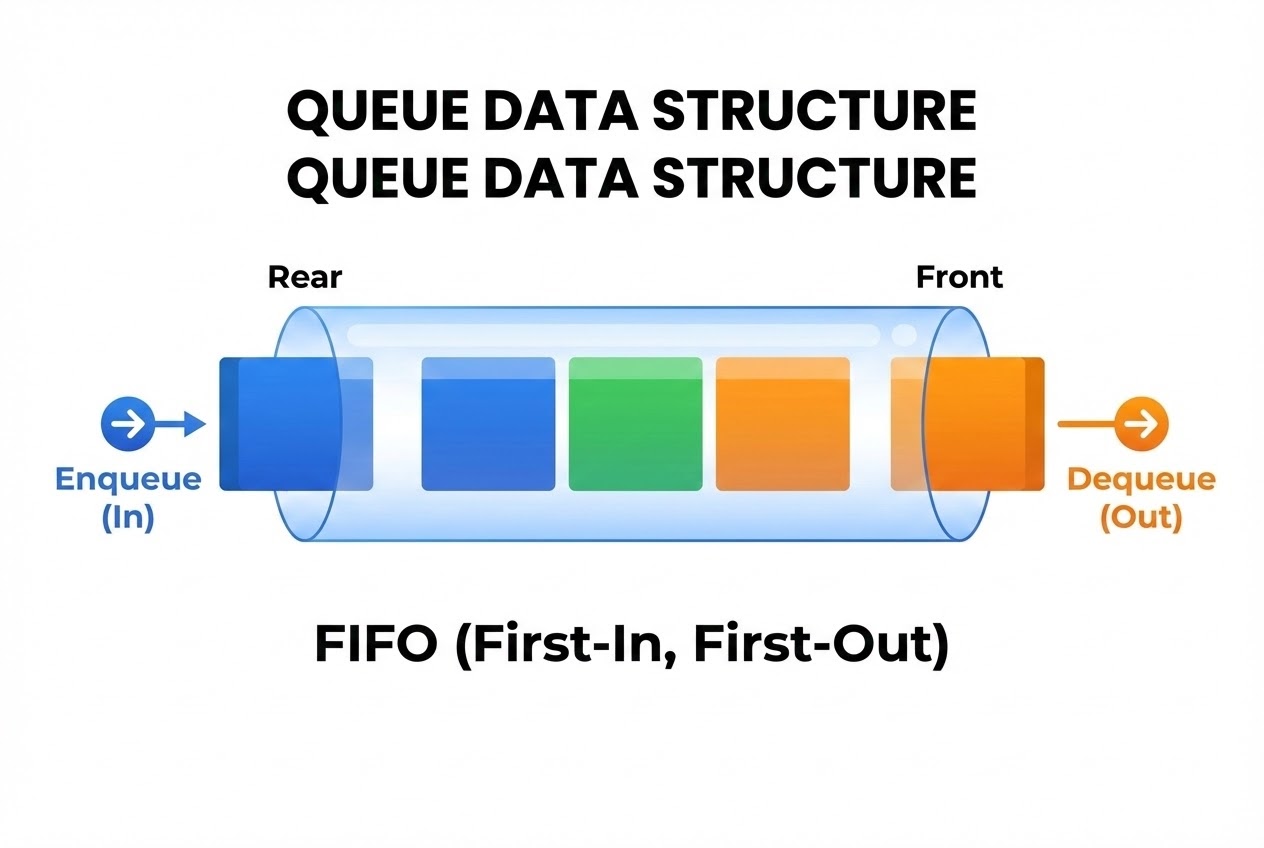

Within the taxonomy of Data Structures, the Queue is classified strictly as a Linear Data Structure, characterized by aggressively segregating its insertion and extraction vectors onto opposing polar ends. Its unyielding operational law is First-In, First-Out (FIFO). This is the exact architectural inversion of the Stack (LIFO).

Conceptually, a Queue functions identically to a movie theater ticket line or a tollbooth lane. The absolute first packet of data that queues up is guaranteed to be serviced first. Any newly arriving telemetry is unconditionally banished to the rear.

The Two Prime Directives (Operations):

A Queue’s survival hinges on two fundamental algorithmic actions:

- Enqueue: The tactical injection of an incoming data payload explicitly onto the absolute “Rear” (or Tail) of the chain.

- Dequeue: The systematic extraction and demolition of the primary data payload resting on the absolute “Front” (or Head).

Structural Implementation Strategies:

Engineering blueprints dictate three dominant architectures to construct a Queue, possessing specific tactical trade-offs:

- The Linear Array Queue: The most primitive methodology. However, it harbors a devastating flaw: as you extract data from the Front (Dequeue), the abandoned memory locations transition into permanent “Dead Space”. Re-purposing this dead RAM demands burning CPU cycles to physically Shift all remaining elements forward, making it wholly unfit for high-speed bare-metal applications.

- The Circular Queue (Ring Buffer): This is the undisputed Standard Heavyweight in Embedded Systems! To eradicate Dead Space entirely, engineers architect the Array so its trailing edge physically wraps back around to intersect its origin (Index 0). When the writing Pointer hits maximum silicon capacity, it dynamically “Wraps Around” seamlessly. This operation is conventionally mathematically calculated using Modulo (

%) division. - The Linked List Queue (Dynamic Array): Constructed by chaining detached Nodes deployed via Dynamic Memory (

malloc). This architecture infinitely expands mirroring active data flow—until total RAM exhaustion. To govern it efficiently, it rigidly insists on anchoring two isolated Pointers: aFrontlocking onto the alpha node, and aRearpermanently monitoring the omega node, granting O(1) latency for all actions.

The Overwhelming Importance of Queues in Hardware:

- Peripheral Hardware FIFOs: Exchanging data streams via UART or SPI would cripple a CPU if it were forced to software bit-bang every cycle. Modern microcontrollers possess micro-circuit FIFOs baked physically into the silicon. They ingest raw signal telemetry, hoard it, and unleash a Hardware Interrupt only when the Queue bridges a specific threshold (e.g., Half-Full), offloading massive strain from the core processor.

- Message Queues (IPC): Dominating Operating Systems (OS) and inter-process communications (IPC), software-defined Queues operate as armored intermediaries. They funnel telemetry asynchronously between disconnected parallel Tasks, structurally managed directly by the kernel.

- Priority Queues: In mission-critical environments, Queues are architecturally modified so that incoming payloads carrying “Maximum Priority Classification” aggressively bypass the entire line and execute a forced VIP Dequeue—mechanically similar to emergency trauma triage logic in a hospital.

Code Example: Architecting a Static Circular Queue

Let’s dissect the Circular Queue (Ring Buffer) deployed via an Array. This represents the absolute holy grail for Embedded hardware buffers because caching static memory at compile-time violently eliminates any threat of a Dynamic Memory Leak.

#include <stdio.h>

#include <stdbool.h>

#define QUEUE_SIZE 8

/* Blueprinting the Circular Queue Architecture */

typedef struct {

int data[QUEUE_SIZE];

int front;

int rear;

int count; // Monitoring active payloads internally expedites Full/Empty assertions

} CircularQueue;

/* Initializer protocol to zero-out the structure */

void initQueue(CircularQueue *q) {

q->front = 0;

q->rear = 0;

q->count = 0;

}

/* Validation Protocol: Scanning for architectural maximum capacity */

bool isFull(CircularQueue *q) {

return (q->count == QUEUE_SIZE);

}

/* Validation Protocol: Scanning for total logic void */

bool isEmpty(CircularQueue *q) {

return (q->count == 0);

}

/* 1. Enqueue Operation: Ram data packet into the Rear sector */

bool enqueue(CircularQueue *q, int value) {

if (isFull(q)) {

printf("CRITICAL ALARM: Queue Overflow! Aborting enqueue for %d\n", value);

return false;

}

// Inject packet and calculate the next cyclic coordinate using Modulo wrapping

q->data[q->rear] = value;

q->rear = (q->rear + 1) % QUEUE_SIZE;

q->count++;

return true;

}

/* 2. Dequeue Operation: Extract processing payload from the Front sector */

int dequeue(CircularQueue *q, bool *success) {

if (isEmpty(q)) {

printf("CRITICAL ALARM: Queue Underflow! No payload found.\n");

*success = false;

return -1;

}

// Intercept payload and mathematically advance the Front pointer circularly

int value = q->data[q->front];

q->front = (q->front + 1) % QUEUE_SIZE;

q->count--;

*success = true;

return value;

}

int main(void) {

CircularQueue q;

initQueue(&q);

enqueue(&q, 10);

enqueue(&q, 20);

enqueue(&q, 30);

bool ok;

printf("Dequeued Component: %d\n", dequeue(&q, &ok)); // Extracts 10 first (FIFO)

printf("Dequeued Component: %d\n", dequeue(&q, &ok)); // Extracts 20 second

return 0;

}

Security Protocols to Defeat Queue Dereferencing

Managing C-language Queues demands extreme structural paranoia. Defend your firmware architecture against execution meltdowns utilizing these parameters:

- Unconditionally Intercept Overflows and Underflows: Preceding any

Enqueuestrike, you Must execute an algorithmic bounds-check (Queue Full verification) to definitively block obliterating unread legacy payload data. Defensively mirroring this, prior to aDequeue, scan if the line is an Empty void (Queue Underflow) to prevent dragging “Garbage Addresses” into critical logic pathways. - Execute High-Velocity Modulo Arithmetic: Forcing the CPU to compute

index % QUEUE_SIZEusing software division cycles absolutely wrecks execution speeds on hardware lacking hardware dividers. Deep Embedded hacker trick: Strictly enforceQUEUE_SIZEinto strict base-2 exponents (8, 16, 256) and deploy brutal Bitwise AND masking (&) instead of%(e.g.,index = (index + 1) & (QUEUE_SIZE - 1);). The processing speed upgrade is astronomical. - Preventing Memory Leak Contagion: If you are forced to deploy a Linked List

mallocQueue, your primary directive during aDequeuesequence is universally triggeringfree()on the severed Node. Defecting on this duty guarantees a fatal Memory Leak, starving the board’s RAM to the absolute zero-point. - Terminating Zombie Pointers (Dangling Pointers): Whenever your algorithmic system drains a Linked Queue completely dry (Empty state), explicitly command the orphaned

frontandrearpointers to crash back toNULL. Retaining floating coordinates to non-existent memory blocks invites highly disastrous Undefined Behaviors.

Conclusion

The Queue (FIFO) presents a beautifully simplistic architecture driving a staggeringly potent processing engine. It is the absolute cornerstone of fabricating secure buffering matrices designed to intercept wildly asynchronous signal deluges—encompassing internal Hardware FIFOs, Direct Memory Access (DMA) conduits, and vast RTOS Message Queues. Committing the Circular Buffer technique to muscle memory implies transitioning from an amateur developer into a hardened firmware engineer capable of handling extreme data throughput.

If you are eager to weaponize a Circular Queue executing high-speed, interrupt-driven sensor acquisitions, or attempting cross-task thread syncing utilizing an RTOS, jump into our engineering forums at www.123microcontroller.com! Stand by for the next brutal structural breakdown. Happy Coding!